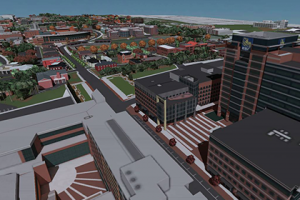

Driving simulators allow a wide range of in-vehicle studies to be performed, particularly studies of the cognitive demand and distraction caused by devices in the car. By using simulations, researchers can investigate driving behaviors in high-risk situations without putting participants or others in harm's way. The in-vehicle research currently being conducted within the School of Psychology at Georgia Tech could provide more insight into behavior, and be more widely applicable, if participants were able to drive in areas that they are familiar with. Specifically, research being done in coordination with the Atlanta Shepherd Center, investigating the use of in-vehicle technologies to assist individuals who have had a traumatic brain injury, could benefit greatly from these "real-location" maps. Coincidentally, the Georgia Tech School of Architecture has already developed a 3D model of the Georgia Tech campus and some of the surrounding areas, including the Peachtree corridor (26 miles along Peachtree Street). However, to make this model usable within the simulator, it must be optimized and converted into a compatible format. Researchers in the School of Architecture and the School of Psychology will work on creating methods and conversion processes that will allow any 3D model to be integrated into the simulator. Developing this conversion process will allow Georgia Tech to offer documentation and map-creation services to other researchers around the world, helping to increase the applicability of in-vehicle research.

The Georgia Tech Sonification Lab is an interdisciplinary research group based in the School of Psychology and the School of Interactive Computing at Georgia Tech. Under the direction of Prof. Bruce Walker, the Sonification Lab focuses on the development and evaluation of auditory and multimodal interfaces, and on the cognitive, psychophysical, and practical aspects of auditory displays, paying particular attention to sonification. Special consideration is paid to Human Factors in the display of information in "complex task environments," such as the human-computer interfaces in cockpits, nuclear power plants, in-vehicle infotainment displays, and the space program.

Since we specialize in multimodal and auditory interfaces, we often work with people who cannot look at, or cannot see, traditional visual displays. This means we work on a lot of assistive technologies, especially for people with vision impairments. We study ways to enhance wayfinding and mobility, math and science education, entertainment, art, music, and participation in informal learning environments like zoos and aquariums.

The Lab includes students and researchers from all backgrounds, including psychology, computing, HCI, music, engineering, and architecture. Our research projects are collaborative efforts, often including empirical (lab) studies, software and hardware development, field studies, usability investigations, and focus group studies.