Fall and Spring • Technology Square Research Building

Explore the GVU Center Research Showcase

Virtual reality, robotics, civic computing, information visualization and artificial intelligence are just a few of the research areas that come to life at the GVU Center Research Showcase, which shows the many ways society can benefit from these innovations.

The GVU Center Research Showcase invites you to experience Georgia Tech research in people-centered technology that enhances our communities and impacts how we live day-to-day. More than 100 interactive projects will let you touch, control and imagine what technology will enable in the future.

The GVU Center Research Showcase offers:

- Hands-on and interactive demonstrations to experience technology in a wide variety of formats.

- Research from more than 30 labs with specialties that cover broad areas such as mobile and wearable technology, digital media, transportation, online communities, artificial intelligence, game development, graphics, user interfaces and much more.

- Networking with industry representatives and access to researchers and their latest work.

- A range of innovative ideas, interfaces and devices that can only be experienced here.

Our relationship with personal technology and how we use it is evolving. Research through GVU's interdisciplinary teams shows the opportunities that exist for technology to address long-standing societal challenges and how we can make new connections to advance our lives and those of others.

What will shape your technology experience in the future? Come find out at the GVU Center.

Previous Showcase Highlights

Explore a galaxy far, far away without the hyperspace travel

Star Wars Escape is a VR experience that puts players in a holding cell, challenging them to use their wits and the environment to escape while adding a morally challenging twist. Players may even find an ally to help, if they can utilize their avatar's building skills.

Lab: Prototyping eNarrative Lab

Faculty: Janet Murray

Researchers: Kyeungbum Kim, Vi Nguyen, Chris Purdy, Ziyin Zhang

Want to teach an AI a trick?

Spend a few minutes with Explainable AI, the system where you can teach an AI a simple task and help it get smarter. Pick your skill to show off and then give the artificially intelligent agent an explanation of why you did that task. Volunteers can have their "DNA" baked into the thinking process of the AI agent. The goal is to help the AI explain its actions when it needs to give a non-expert response to a human inquiring why it made a certain decision.

Lab: Entertainment Intelligence Lab

Faculty: Mark Riedl

Researchers: Upol Ehsan, Brent Harrison, Pradyumna Tambwekar, Cheng Hann Gan, Jiahong Sun

Learn piano, or Braille, without even paying attention

Passive Haptic Learning gloves teach the "muscle memory" of how to play piano melodies or Braille without the learner's active attention. These gloves can also help wearers recover sensation in their hands after a traumatic event, such as a partial spinal cord injury.

Lab: Contextual Computing Group

Faculty: Thad Starner

Researchers: Caitlyn Seim

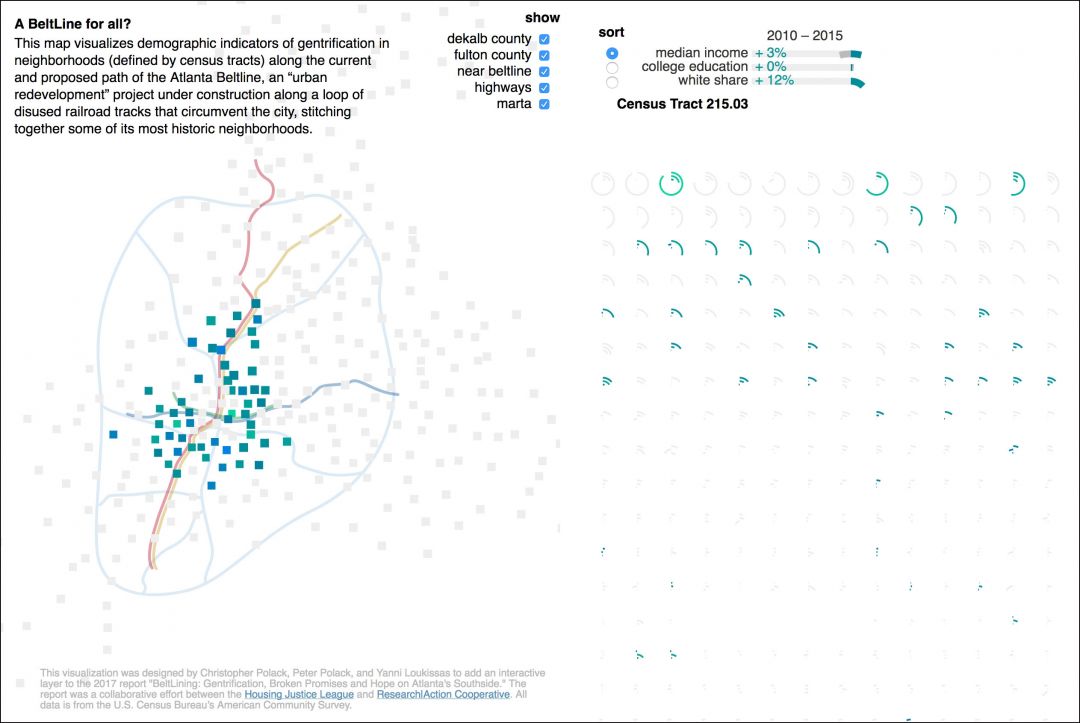

The Beltline and its impact on connecting (and separating) Atlanta's communities

The Beltline pedestrian trail is a large-scale urban revitalization project that has transformed existing infrastructure and created a significant impact in connecting Atlanta's communities. But it also highlights equity challenges in the city. This interactive map visualizes demographic indicators of gentrification in neighborhoods (defined by census tracts) along the current and proposed path of the Atlanta Beltline, which runs along a loop of disused railroad tracks that encircles the city, stitching together some of its most historic neighborhoods.

Faculty: Yanni Loukissas

Look left, right, left again... then at your screen

Pedestrians are the largest group of road users and represent a large proportion of road casualties. Divided attention disrupts walking: people who look at their mobile screens are more likely to cross a street in a risky fashion. Safecrossing is a project to design a solution that helps mobile device users cross streets safely, with the aim of reducing casualties.

Lab: MS-HCI Project Lab

Faculty: Dr. Ellen Zegura

Researchers: Nishant Panchal

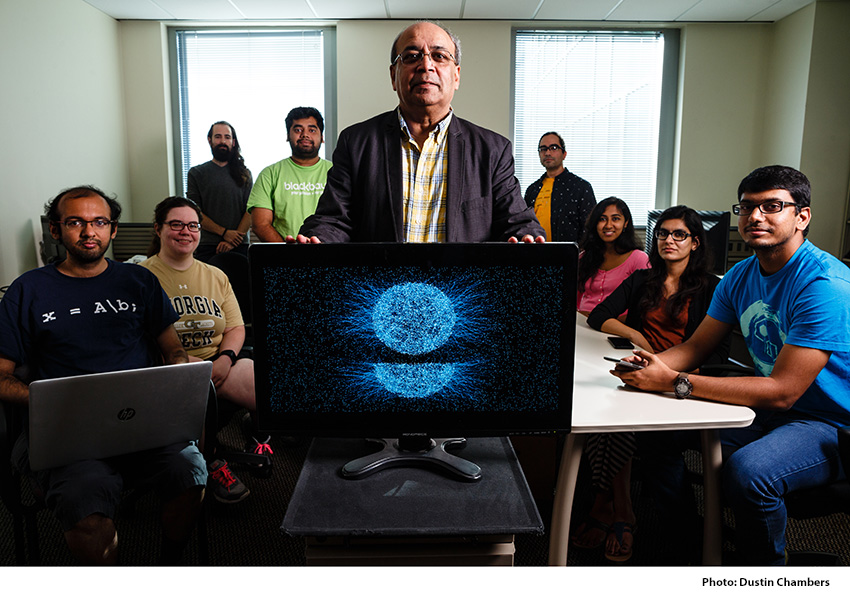

Leading the online education revolution

Virtual Teaching Assistant Jill Watson is a small but increasingly important part of Georgia Tech's tremendously successful Online Master of Science in Computer Science (OMSCS) program. And now she has some siblings to help her scale online education even further. Jill was first introduced in an OMSCS course, answering routine, frequently asked questions on the class forum. She did so with close to 100 percent accuracy and with an authenticity that surpassed current AI agents. To date, 25 human TAs have worked alongside Jill and more than a thousand students have interacted with her.

Lab: Design & Intelligence Laboratory

Faculty: Ashok Goel, David Joyner, Spencer Rugaber

Students: Lalith Polepeddi, Jose Delgado, Bobbie Eicher, Marissa Gonzales, Joshua Killingsworth, Sydni Peterson, Mike Lee, Kunaal Naik, Marc Marone, Roy Hong

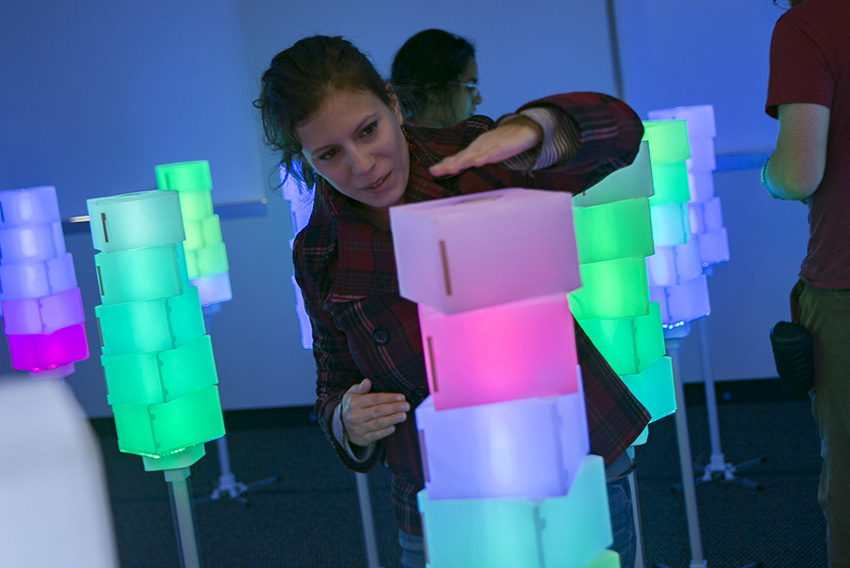

Nature-inspired tech lets the user discover different interactions

The Light Orchard is an interactive installation that invites people to walk into a grove of futuristic trees, lit with color. The trees are aware of the presence of people in their space and can respond in many different ways. Users can play different games, watch animations and work with different simulations that allow them to easily collaborate, learn and play together.

Lab: Interactive Product Design Lab

Students: James Hallam, Clement Zheng, Noah Posner, Heydn Ericson, Matthew Swarts
