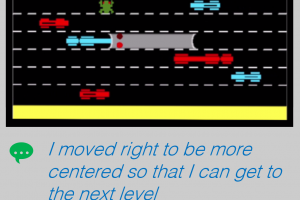

As Artificial Intelligence (AI) becomes ubiquitous in our lives, there is a greater need for AI systems to be explainable, especially to end-users. Today, most AI systems require expert knowledge to interact with, which is not a sustainable way to improve human-AI collaboration. How might we make AI systems more human-centered, especially for non-AI experts? In this Explainable AI (XAI) project, we introduce a technique called "rationale generation," in which an AI agent (character) plays a video game (Frogger) and provides rationales in plain English to justify its actions. This body of work makes two main contributions. First, from a Deep Neural Network (DNN) perspective, we share how to configure the network to produce perceptibly different rationales. Second, from a human-computer interaction (HCI) perspective, we share the results of two user studies that show that our system's outputs are plausible (when compared against baselines) and how human perceptions of these rationales affect user acceptance of and trust in AI systems.

The Entertainment Intelligence Lab focuses on computational approaches to creating engaging and entertaining experiences. The problem domains they work on include computer games, storytelling, interactive digital worlds, adaptive media, and procedural content generation. They expressly focus on computationally "hard" problems that require automation, just-in-time generation, and scalability of personalized experiences.